At small scale, Amazon API Gateway feels clean.

You define routes. You deploy. You map a custom domain. Done.

At SaaS scale - especially multi-tenant, multi-service SaaS - it starts pushing back.

We hit that wall.

Not because API Gateway is bad. It isn't. Amazon API Gateway is an excellent managed edge service. But like every managed abstraction, it comes with boundaries. And those boundaries matter when you're building a system where microservices deploy independently and tenants are isolated into deployment "cells."

This is the story of how a weekend rewrite turned into a cleaner, more scalable API architecture.

The Friction Nobody Mentions

Microservices are supposed to reduce coupling.

But if multiple services deploy into the same API Gateway, you've just reintroduced coupling - just at the infrastructure layer instead of the code layer.

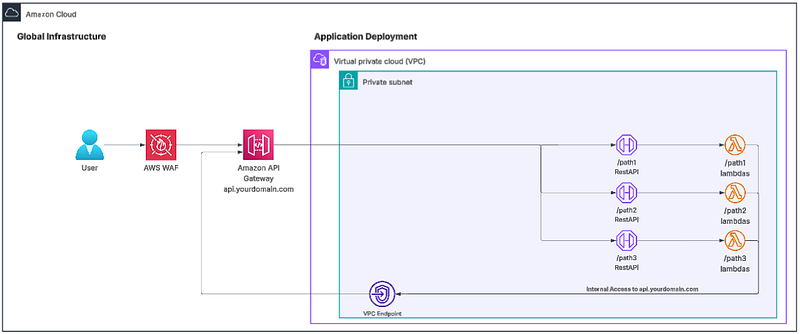

We tried the obvious approach first: a shared RestAPI. Each service owned its own base path. Routes were cleanly separated. On paper, it looked fine.

In practice, every deployment mutated the same API definition.

CloudFormation stacks became subtly order-dependent. A failed deployment could leave routes in an inconsistent state. Rolling back one service sometimes meant redeploying another. The blast radius started growing in ways that felt… wrong.

Microservices shouldn't feel fragile.

So we split things apart.

We moved to multiple APIs under a single domain. That works - technically. But now every service must live under its own nested base path, because you can't map the root paths of several RestAPIs onto the root of one domain. The API surface is no longer well organized for the consumer, and the whole thing becomes a configuration management exercise instead of a clean ownership model.

Then we tried something that was "correct" but messy: one RestAPI per root-level path. This avoided having to nest paths for each service. /tenants had its own API. /users had its own API. Even when both belonged to the same microservice.

It worked. It was also a deployment headache.

More mappings. More monitoring. More places for drift. We quickly reached over 20 RestAPIs across 3 different data planes, for only two microservices! The API Gateway scaling limit shifted from the resource limits per API to the number of base path mappings allowed in an account.

It wasn't broken - but it didn't feel scalable.

The Weekend Rewrite

I blocked off a long stretch of time and decided to stop fighting the service limits.

Instead of forcing API Gateway to behave like a shared edge router, I built one… In Rust. A language I had never written before.

Using AI-assisted tooling to accelerate implementation (AWS Kiro and Claude Opus 4.5), I built a lightweight, deterministic API router, worked through the CI/CD and deployment scripting, and had it deployed and operational in under 72 hours.

It lives in ECS/Fargate behind an ALB. It doesn't try to be clever. It doesn't try to be dynamic in dangerous ways. It simply routes predefined base paths to predefined internal APIs.

The key rule: the caller never controls the destination host.

Routing targets are predetermined configuration - first YAML, eventually DynamoDB. The router performs longest-path matching, rewrites host headers, forwards tracing headers, and blocks obviously dangerous targets like localhost or metadata endpoints.
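To make the longest-path matching concrete, here is a minimal sketch of how it might look. It is not the router's actual code: the `Route` struct, its field names, and the `match_route` function are assumptions made for illustration, and the path is assumed to arrive without its query string.

```rust
/// One entry in the routing table: a base path and the internal target it maps to.
/// Field names are illustrative, not the router's real schema.
#[derive(Debug, Clone, PartialEq)]
pub struct Route {
    pub base_path: String,   // e.g. "/users"
    pub target_host: String, // predetermined upstream host, never taken from the request
}

/// Returns true when `path` equals `base` or sits beneath it on a segment boundary,
/// so "/users" matches "/users" and "/users/42" but not "/usersettings".
fn path_has_prefix(path: &str, base: &str) -> bool {
    let base = base.trim_end_matches('/');
    if base.is_empty() {
        // A base path of "/" acts as a catch-all.
        return path.starts_with('/');
    }
    match path.strip_prefix(base) {
        Some(rest) => rest.is_empty() || rest.starts_with('/'),
        None => false,
    }
}

/// Picks the route with the longest matching base path, or None if nothing matches.
pub fn match_route<'a>(routes: &'a [Route], path: &str) -> Option<&'a Route> {
    routes
        .iter()
        .filter(|r| path_has_prefix(path, &r.base_path))
        .max_by_key(|r| r.base_path.trim_end_matches('/').len())
}
```

Matching on segment boundaries rather than raw string prefixes is the design choice worth noting: it keeps a route for /users from accidentally capturing an unrelated path such as /usersettings.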

It is intentionally constrained. This isn't a generic reverse proxy. It's a controlled internal switchboard.
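One way to enforce that constraint is to vet every configured target before the router will accept it. The sketch below shows the idea using only the standard library. The function name `is_blocked_target` and the exact rule set are assumptions for illustration; the real router's checks may differ, and this version does not inspect what a hostname resolves to.

```rust
use std::net::IpAddr;

/// Returns true when a configured target host should be refused.
/// Assumed rule set: loopback names and addresses, link-local addresses
/// (which include the 169.254.169.254 metadata endpoint), and the
/// unspecified address.
pub fn is_blocked_target(host: &str) -> bool {
    // Normalize: trim whitespace, drop a trailing dot, lowercase.
    let host = host.trim().trim_end_matches('.').to_ascii_lowercase();
    if host.is_empty() || host == "localhost" || host.ends_with(".localhost") {
        return true;
    }
    // Strip brackets from IPv6 literals such as "[::1]".
    let bare = host.trim_start_matches('[').trim_end_matches(']');
    match bare.parse::<IpAddr>() {
        Ok(IpAddr::V4(ip)) => ip.is_loopback() || ip.is_link_local() || ip.is_unspecified(),
        Ok(IpAddr::V6(ip)) => {
            // fe80::/10 is the IPv6 link-local range.
            ip.is_loopback() || ip.is_unspecified() || (ip.segments()[0] & 0xffc0) == 0xfe80
        }
        // Not an IP literal: treated as a hostname and allowed by this sketch.
        Err(_) => false,
    }
}
```

Running a check like this when configuration is loaded, rather than per request, fits the "predetermined targets" rule: a bad entry is rejected before it can ever receive traffic.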

The New Shape

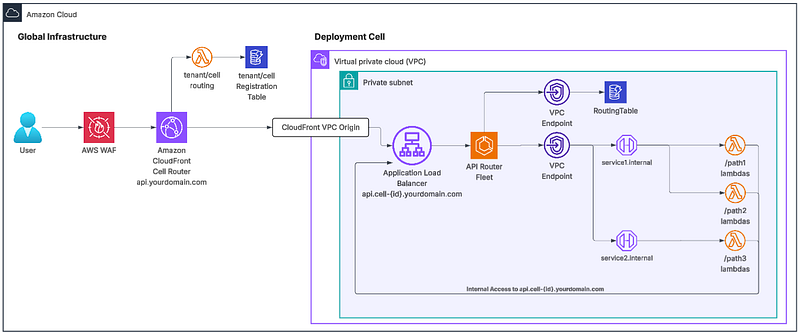

The final architecture looks like this:

Requests flow through WAF and Amazon CloudFront. A Lambda@Edge function determines which deployment cell a request belongs to. From there, traffic moves through an ALB to the Rust router running in ECS/Fargate. The router forwards the request through a VPC Endpoint to a private API Gateway.
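The last hop depends on the router replacing the caller's Host header with one that identifies the target private API. A rough sketch of that step follows, assuming the private API is addressed by its standard `{api-id}.execute-api.{region}.amazonaws.com` hostname. The function name `upstream_headers` and the header representation are hypothetical, and the `api_id` and `region` values are assumed to come from routing configuration rather than from the request.

```rust
/// Builds the headers the router would send upstream, given the headers it received.
/// `api_id` and `region` come from routing configuration, never from the caller.
pub fn upstream_headers(
    incoming: &[(String, String)],
    api_id: &str,
    region: &str,
) -> Vec<(String, String)> {
    // The Host header is always derived from configuration.
    let mut out = vec![(
        "host".to_string(),
        format!("{api_id}.execute-api.{region}.amazonaws.com"),
    )];
    for (name, value) in incoming {
        // Drop the caller's Host; pass everything else through unchanged,
        // including tracing headers such as X-Amzn-Trace-Id.
        if !name.eq_ignore_ascii_case("host") {
            out.push((name.clone(), value.clone()));
        }
    }
    out
}
```

Because the Host value is built only from configured identifiers, a caller cannot steer the request to a different API by sending a crafted Host header.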

And that last part is the real architectural shift.

Each service now owns its own private API Gateway.

- No shared RestAPI definitions.

- No cross-stack mutation.

- No shared resource limits.

Every API is private. Every API is reachable only through a VPC endpoint. Every service deploys independently.

Why This Feels Better

Traffic now moves through an ALB and a small ECS service before reaching API Gateway. It's internal traffic, so the added latency is negligible compared to what we gained.

What changed isn't performance - it's ownership.

Each service deploys its own private API Gateway. No shared definitions. No cross-stack mutations. No shared resource limits. No deployment order anxiety.

The architecture finally reflects the intent of microservices: isolation by default.

It's not a flexible proxy. It's a controlled switchboard. That difference matters.

The Lesson

We didn't remove API Gateway. We stopped forcing it to be the global edge router for a distributed system.

Once routing and service ownership were separated, everything got simpler.